The study of technological ethics can be divided into two distinct parts: the ethics of applied technology and the study of technology and society. Over the past twenty-five years, the vast majority of writing on technology has involved the former: ethical reflections on new possibilities from the latest technology. For example, now that a computer can do X, what are the ethical implications of X? Self-driving cars, general-application robots, medical robotics, smartphones, smart bombs, drones, social media, disinformation, and personal artificial-intelligence applications (Siri, Alexa, etc.) fall into this category.

The latter category, studies of society and technology, covers a host of issues related to the production, development, and implementation of new technologies. This field includes topics such as disparities in digital access; the ecological effects of data mining; diversity, equity, and inclusion among employees at tech companies; and racial, gender, and other biases built into technology (for example, Google understands men’s voices better and facial-recognition technology sees white faces better).

Many excellent studies have already appeared in this second category, such as Ruha Benjamin’s Race After Technology, Safiya Noble’s Algorithms of Oppression, Caroline Criado Perez’s Invisible Women, the Netflix documentary Coded Bias, and Cathy O’Neil’s Weapons of Math Destruction. These studies are largely aimed at a lay audience on the theory that public awareness—and public actions—may be the only effective way to change common practices of the tech giants. Coded Bias is a great example of this work, chronicling Joy Buolamwini’s journey from discovering racial bias in facial recognition as a researcher at MIT Media Lab to testifying in a congressional hearing with Alexandria Ocasio-Cortez.

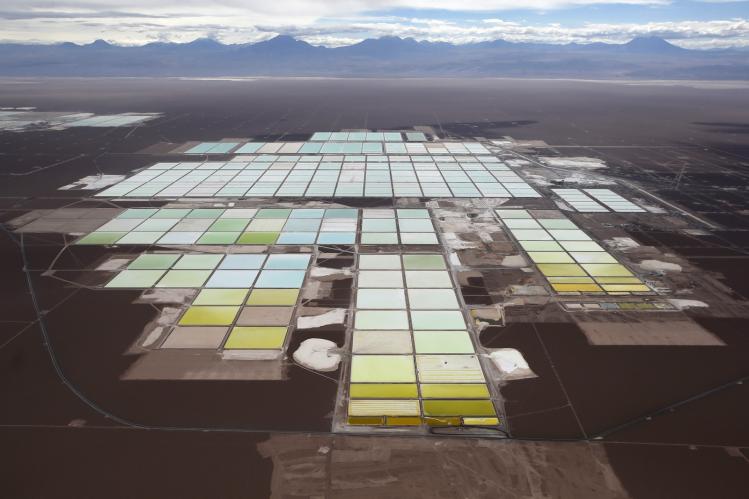

Kate Crawford’s Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence joins this group of essential research for anyone interested in ethical problems in the development and implementation of new technology. But the book stands out in its privileging of the physical over the technological. It is more a book about people, places, and things than about ones and zeros, intended to make us struggle with the assumption that there are technological solutions to all of life’s problems. Refreshingly, Crawford is no Luddite. She does not ask us to delete our Facebook accounts or stop using Google, as she understands the vast power imbalances between the tech giants and the individual. The book, instead, is a magnifying glass on abuses and possible abuses, a lens that shifts the focus away from the latest Tesla or iPhone and toward the hands that carved the earth for the nickel and the now-empty lithium mines that are just as nonrenewable as coal.

Crawford divides the book into surveys on the major issues we face in technology today: the environmental impact of technology, the labor practices of Amazon (and others), the problems of massive data collection, the inherently biased nature of facial recognition, the false promises of affective (emotion-based) AI, and the problems caused by the utilization of AI by local and national governments. This litany of alarm bells warns us about the ways the tech community, under the guise of constructing the latest and greatest tech, systemically devalues human life and the health of the earth.

Crawford writes that she uses the term AI (instead of the industry term “machine learning”) not because she believes in the forthcoming development of a superintelligent sentient piece of technology, but because, despite all evidence to the contrary, the public imagination is firmly enamored of the possibilities of an all-knowing AGI, an artificial general intelligence. This construct has provoked, frightened, and enticed us ever since digital technology was developed in the mid-twentieth century. Innumerable examples exist, from Avengers: Age of Ultron to 2001: A Space Odyssey to Westworld and the more recent Raised by Wolves. AI in art tends to mimic the world within which it is constructed: while AI in The Matrix revealed the dark side of the capitalistic growth of the late 1990s, Gene Roddenberry’s Star Trek showcased the opposite with the character Data, promoting a personhood of AI as the optimistic outcome of artistic and technological innovation. The cylons in Battlestar Galactica, with its themes of espionage and nuclear apocalypse, were originally created at the height of the Cold War. The character of the Director from Netflix’s Travelers, on the other hand, speaks to our current moment: it is an AI made by a desperate humanity trying to correct the sins of the past, like the climate crisis, unceasing wars, and deadly pandemics.

Portrayals of AI in art vary because artificial intelligence in this form simply does not exist, and no one knows when or if it will ever be born. While computers can now beat anyone at chess, drive cars, and pilot spaceships, they are still machines, not sentient intelligences. AI today, writes Crawford, “is neither artificial nor intelligent.” What we call artificial intelligence—apps that decode our voices, find us products on Amazon, give us directions, play our favorite songs, search the internet for keywords, and try to predict the weather—is, in fact, quite physical. “Artificial intelligence is both embodied and material, made from natural resources, fuel, human labor, infrastructures, logistics, histories, and classifications.”

As important as the individual chapters are, they cannot cover every issue. For example, Crawford paints with a broad brush when she discusses racial bias and misogyny, condemning many common practices within the tech community without examining either in depth. And the book has some omissions. While Crawford rightly criticizes the dehumanizing labor practices of Amazon, she does not mention that these practices are not unique to the tech world.

Nevertheless, Crawford’s narrative exploration of ethical issues takes AI from the world of Star Trek and makes it thick, human, and visceral. She presents AI not as a marvel of the future, but as a false promise that brings with it all kinds of problems that can be easy to ignore when we see a driverless car. Technological progress has been and will likely continue to be remarkable, but at what cost?

“When AI’s rapid expansion is seen as unstoppable,” Crawford writes, “it is possible only to patch together legal and technical restraints on systems after the fact: to clean up datasets, strengthen privacy laws, or create ethics boards.” But these responses have always been incomplete and partial. A new mindset, a proper eschatology perhaps, is needed. “How can we intervene to address interdependent issues of social, economic, and climate injustice?... Where does technology serve that vision? And are there places where AI should not be used, where it undermines justice?”

Crawford’s cries for justice echo Pope Francis’s encyclical Laudato si’. “Science and technology are not neutral,” he writes. “From the beginning to the end of a process, various intentions and possibilities are in play and can take on distinct shapes. [We need] to appropriate the positive and sustainable progress which has been made, but also to recover the values and the great goals swept away by our unrestrained delusions of grandeur.”

Atlas of AI is not a book of theology, but I hope it finds its place in a new canon of studies of technology and society that can refocus our minds and hearts toward human loss, ecological disasters, and social awareness in an age of boundless promises of a technological utopia.

Atlas of AI

Power, Politics, and the Planetary Costs of Artificial Intelligence

Kate Crawford

Yale University Press

$28 | 336 pp.
